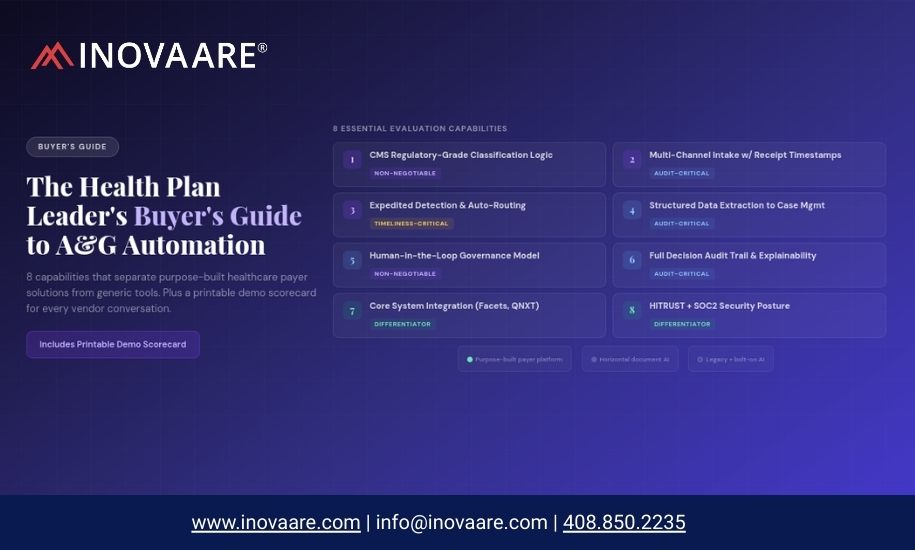

You’ve decided manual appeals and grievances (A&G) intake is no longer sustainable. Now you’re evaluating platforms. This guide gives you the 8 capabilities that distinguish purpose-built healthcare payer solutions from generic tools, plus a printable demo scorecard to bring to every vendor conversation.

Health plan compliance and operations leaders who reach the platform evaluation stage have already done the hard work: they’ve made the internal case that manual A&G intake is a structural liability, they’ve aligned operations, compliance, and IT, and they’ve gotten executive support to move forward. The question at this stage is which platform to choose — and how to structure the evaluation so that the right differentiators surface, not just the most polished product demos.

The A&G intake automation market includes genuine purpose-built solutions, horizontal document AI tools that have been adapted for healthcare, and legacy case management platforms that have bolted on AI modules. They are not equivalent. The evaluation criteria below will surface the differences quickly.

This guide is written for health plan compliance directors, VP-level A&G and Member Services leaders, and the COOs and CIOs who are evaluating or approving their organizations’ intake automation investments. It assumes you have completed the business case phase and are comparing specific platforms. If you are still building the internal case, see our companion guide on the operational and compliance cost of manual intake.

The 8 Capabilities That Separate Purpose-Built From Generic

CMS Regulatory-Grade Classification Logic

Non-Negotiable

The most important differentiator, and the most commonly obscured. Purpose-built A&G intake platforms embed CMS regulatory definitions, drawn from 42 CFR 422/423 Subpart M and the CMS ODAG/CDAG protocol, directly into their classification logic. They distinguish appeals from provider disputes, grievances from inquiries, and expedited from standard based on regulatory criteria, not surface document language.

Generic document AI tools classify by document-type patterns, identifying “complaint letters” or “appeal requests” from language modeling rather than from regulatory definitions. In a CMS audit context, this distinction produces systematic classification errors that generate ODAG findings.

Multi-Channel Intake with Receipt Timestamp Accuracy

Audit-Critical

CMS timeliness is measured from the moment the plan received the request. A platform that captures receipt timestamps only when a case is created in the system, rather than when the document arrived, produces timeliness records that are systematically inaccurate. This creates ODAG timeliness findings regardless of how good the actual review process is.

Compliant platforms capture the receipt timestamp at document arrival across all intake channels: fax, mail, email, web portal, and EDI. The case creation timestamp and the receipt timestamp are recorded separately and both are visible in the audit log.
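A minimal sketch of why the two timestamps need to be stored separately. The `IntakeRecord` class, its field names, and the sample dates are hypothetical and only illustrate the idea; the 72-hour window is borrowed from the expedited range mentioned in this guide.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone


@dataclass(frozen=True)
class IntakeRecord:
    """Hypothetical intake record; names are illustrative, not a vendor schema."""
    channel: str                 # e.g. "fax", "mail", "email", "portal", "edi"
    received_at: datetime        # when the document arrived at the plan
    case_created_at: datetime    # when the case was opened in the system

    def due_at(self, window: timedelta) -> datetime:
        # The timeliness clock starts at receipt, not at case creation.
        return self.received_at + window

    def intake_lag(self) -> timedelta:
        # How long the document waited before a case existed.
        return self.case_created_at - self.received_at


# A fax that arrives late in the day and is keyed in the next morning.
record = IntakeRecord(
    channel="fax",
    received_at=datetime(2025, 3, 3, 16, 45, tzinfo=timezone.utc),
    case_created_at=datetime(2025, 3, 4, 9, 10, tzinfo=timezone.utc),
)

print(record.due_at(timedelta(hours=72)))  # deadline measured from receipt
print(record.intake_lag())                 # lag that disappears if only case creation is stored
```

If only `case_created_at` were kept, the computed deadline in this example would be more than 16 hours later than the one measured from actual receipt.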

Expedited Detection and Automatic Routing

Timeliness-Critical

Expedited appeals and coverage determinations have 24-72 hour windows. Missing an expedited indicator because a document arrived in an ambiguous format, or because a fatigued staff member didn’t recognize the language, is one of the most direct paths to an ODAG timeliness finding. Purpose-built platforms detect expedited indicators automatically: from explicit expedited language, from clinical urgency signals, and from provider-submitted forms that trigger expedited processing under CMS rules.
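The three signal types can be sketched as independent checks whose results are combined, so that any one of them is enough to route a document as expedited. Everything here is an assumption for illustration: the phrase lists are placeholders rather than CMS criteria, and a production system would use far richer detection than substring matching.

```python
from dataclasses import dataclass

# Placeholder phrase lists, NOT regulatory criteria.
EXPLICIT_PHRASES = ("expedited", "fast appeal", "urgent request")
URGENCY_PHRASES = ("seriously jeopardize", "life-threatening", "cannot wait")


@dataclass
class IncomingDocument:
    """Hypothetical view of a received document."""
    text: str
    submitted_by_provider: bool = False
    provider_requested_expedite: bool = False  # e.g. a checked box on a provider form


def expedited_signals(doc: IncomingDocument) -> list[str]:
    """Return the name of every expedited signal found, for routing and audit."""
    lowered = doc.text.lower()
    signals = []
    if any(phrase in lowered for phrase in EXPLICIT_PHRASES):
        signals.append("explicit_language")
    if any(phrase in lowered for phrase in URGENCY_PHRASES):
        signals.append("clinical_urgency")
    if doc.submitted_by_provider and doc.provider_requested_expedite:
        signals.append("provider_form")
    return signals


def route(doc: IncomingDocument) -> str:
    # Any single signal is enough to send the document to the expedited queue.
    return "expedited_queue" if expedited_signals(doc) else "standard_queue"


urgent = IncomingDocument(text="Waiting could seriously jeopardize the member's health.")
routine = IncomingDocument(text="Please review the denial of my claim.")
print(expedited_signals(urgent), route(urgent))
print(expedited_signals(routine), route(routine))
```

Returning the list of signals, rather than a bare yes or no, keeps the reason for the routing decision available to the audit log.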

Structured Case Record Generation with Complete Data Extraction

Operations-Critical

The intake agent’s job isn’t just to classify the document. It is to produce a complete case record that a reviewer can begin work on immediately. That means extracting the issue description, claim number, event date, member ID, respondent, issue type, AOR information, and case sub-category from the incoming document and auto-populating the case record. Reviewers who receive a shell case with only a classification label and have to re-read the source document for data are getting less than half the value of intake automation.
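One way to picture the difference between a complete case record and a shell case is a record type that carries every field named above and can report which ones extraction left empty. The `CaseRecord` class and its attribute names are hypothetical; only the list of fields comes from this guide.

```python
from dataclasses import dataclass, fields
from datetime import date
from typing import Optional


@dataclass
class CaseRecord:
    """Hypothetical case record covering the fields listed in this guide."""
    classification: str
    issue_description: Optional[str] = None
    claim_number: Optional[str] = None
    event_date: Optional[date] = None
    member_id: Optional[str] = None
    respondent: Optional[str] = None
    issue_type: Optional[str] = None
    aor_information: Optional[str] = None
    case_sub_category: Optional[str] = None

    def missing_fields(self) -> list[str]:
        # Fields a reviewer would still have to find in the source document.
        return [f.name for f in fields(self) if getattr(self, f.name) is None]


# A "shell case": classified, but nothing extracted.
shell = CaseRecord(classification="appeal")
print(shell.missing_fields())
```

During a demo, asking to see the equivalent of `missing_fields()` for a test document shows quickly how much re-keying a reviewer would still face.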

Full Decision Audit Logging with CMS-Ready Evidence Export

Audit-Critical

CMS program auditors will request case documentation including evidence of how cases were received, classified, and processed. A platform that produces case records but not classification audit logs showing the criteria applied, the confidence level, and the document evidence used is not defensible in a CMS audit context. The audit log must be available at the case level, not just in aggregate reporting.

Even more critically: the platform should support the production of a CMS-ready universe export that reflects accurate case types, receipt timestamps, and case counts — because universe accuracy is tested directly in ODAG and CDAG audits.
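As a rough illustration of a case-level audit entry, the sketch below records the criteria applied, the confidence level, and the document evidence alongside both timestamps, then serializes it. The class names, field names, criterion identifier, and sample values are all hypothetical and are not a CMS universe layout or any vendor's schema.

```python
import json
from dataclasses import dataclass, asdict
from datetime import datetime, timezone


@dataclass
class EvidenceSpan:
    page: int
    quote: str   # text from the source document that supported the decision


@dataclass
class ClassificationAuditEntry:
    """Hypothetical case-level audit entry."""
    case_id: str
    classification: str
    criteria_applied: list[str]
    confidence: float
    evidence: list[EvidenceSpan]
    received_at: str     # ISO 8601, time of document arrival
    classified_at: str   # ISO 8601, time the classification was made


entry = ClassificationAuditEntry(
    case_id="CASE-0001",
    classification="grievance",
    criteria_applied=["placeholder-criterion-id"],
    confidence=0.93,
    evidence=[EvidenceSpan(page=2, quote="I waited three weeks for a callback.")],
    received_at=datetime(2025, 3, 3, 16, 45, tzinfo=timezone.utc).isoformat(),
    classified_at=datetime(2025, 3, 3, 16, 46, tzinfo=timezone.utc).isoformat(),
)

# asdict() also converts the nested EvidenceSpan objects to plain dicts.
print(json.dumps(asdict(entry), indent=2))
```

The point of the structure is that the reasoning travels with the individual case, so it can be produced for any single case an auditor samples.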

Human Review Integration with Structured Handoff Workflow

Compliance Architecture

The intake agent creates the case and routes it, but a human reviewer makes the determination. The platform must structurally require human review before any determination affecting member benefits is issued. This isn’t a policy requirement layered on top of the AI. It should be the architectural design of the system. The reviewer should receive a complete, classified, enriched case with all extracted data visible, so their time is spent on clinical and regulatory judgment rather than data entry.

Platforms that allow AI agents to issue correspondence or close cases without a human reviewer in the chain are not compliant with CMS requirements for MA plans, regardless of how they are marketed.
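"Structurally require" can be read as: there is no code path that records a determination without a named human reviewer. The sketch below is one assumed way to express that; the `Case` class, the `HumanReviewRequired` exception, and the identifiers are hypothetical.

```python
from dataclasses import dataclass
from typing import Optional


class HumanReviewRequired(Exception):
    """Raised when a determination is attempted without a human reviewer."""


@dataclass
class Case:
    case_id: str
    classification: str
    determination: Optional[str] = None
    determined_by: Optional[str] = None

    def issue_determination(self, outcome: str, *, reviewer_id: str) -> None:
        # The reviewer is a required argument, and an empty value is rejected,
        # so an automated agent cannot close the case on its own.
        if not reviewer_id:
            raise HumanReviewRequired(f"{self.case_id}: reviewer is required")
        self.determination = outcome
        self.determined_by = reviewer_id


case = Case(case_id="CASE-0001", classification="appeal")

try:
    case.issue_determination("overturned", reviewer_id="")
except HumanReviewRequired as err:
    print("blocked:", err)

case.issue_determination("overturned", reviewer_id="reviewer-17")
print(case.determination, case.determined_by)
```

The design choice worth probing in a demo is the same one shown here: whether the guard lives in the data model itself or only in a configurable workflow rule that could be switched off.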

CMS Guidance Synchronization with Change-Controlled Updates

Long-Term Compliance

CMS updates Parts C and D A&G guidance regularly. The November 2024 guidance update added grievances to the requirements for coverage determinations and appeals, clarified provider versus enrollee filing rights, and modified the standards for grievance notification. A platform whose classification logic is not updated in synchronization with CMS guidance changes will systematically misclassify cases under outdated criteria.

More importantly: the update process must be change-controlled — meaning the plan knows when a guidance change is implemented, can validate the new logic before it goes live, and has a documented record of when each regulatory update was incorporated.
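A change-controlled update process implies a record per ruleset version with three separate facts: when the vendor released it, when the plan validated it, and when it went live. The sketch below is an assumed model of that record; the class, the exception, the version labels, and the dates are hypothetical.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional


class NotValidated(Exception):
    """Raised when go-live is attempted before the plan has validated the rules."""


@dataclass
class RulesetVersion:
    version: str
    guidance_reference: str              # which guidance update this implements
    vendor_released_on: date
    plan_validated_on: Optional[date] = None
    effective_on: Optional[date] = None

    def activate(self, effective_on: date) -> None:
        if self.plan_validated_on is None:
            raise NotValidated(f"{self.version} has not been validated by the plan")
        if effective_on < self.plan_validated_on:
            raise ValueError("go-live cannot precede plan validation")
        self.effective_on = effective_on


def active_version(history: list[RulesetVersion], on: date) -> Optional[RulesetVersion]:
    """Return the ruleset that was live on a given date, if any."""
    live = [v for v in history if v.effective_on is not None and v.effective_on <= on]
    return max(live, key=lambda v: v.effective_on, default=None)


v1 = RulesetVersion("1.0", "baseline guidance", date(2024, 1, 10), date(2024, 1, 20))
v1.activate(date(2024, 2, 1))
v2 = RulesetVersion("1.1", "November 2024 guidance update", date(2024, 12, 2))

try:
    v2.activate(date(2024, 12, 5))       # blocked: the plan has not validated yet
except NotValidated as err:
    print("blocked:", err)

print(active_version([v1, v2], date(2024, 12, 10)).version)
```

Being able to answer "which ruleset classified this case on this date" is what makes the documented record useful later in an audit.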

Integration with A&G Case Management and Universe Scrubber

Platform Architecture

Intake automation that operates as a standalone module produces classified cases that still require manual transfer to the plan’s case management system and universe tracking. The full value of intake automation is realized when the intake agent creates a case directly in the case management platform, with the universe record, timeliness clock, and SLA tracking all initiated simultaneously at receipt.

Plans that are preparing for CMS audits also need their intake data connected to their CMS Universe Scrubber, so that classification errors detected in the universe can be traced back to the intake record and corrected before submission. Star Ratings appeal measures also require accurate universe data that flows from intake through to Independent Review Entity (IRE) reporting, a connection that only works if intake, case management, and universe tracking are on the same data platform.

Platform Comparison: Purpose-Built vs. Generic

| Evaluation Criterion | ❌ Generic / Horizontal Tool | ✓ Purpose-Built for Healthcare Payers |
|---|---|---|
| Classification logic basis | General NLP document type models; configured for healthcare | Embedded 42 CFR 422/423 regulatory definitions; built for CMS compliance |
| Receipt timestamp capture | Captured at case creation; requires manual process to document actual receipt | Captured at document arrival across all channels; separate from case creation timestamp |
| Expedited detection | Keyword matching; misses clinical and provider-form expedited indicators | Multi-signal detection including clinical urgency and provider-form triggers |
| Audit log granularity | Classification score or confidence rating; reasoning not visible at case level | Full classification reasoning with criteria applied, document evidence, and confidence, all at case level |
| Universe export | Case management data export; receipt timestamps may be system timestamps | CMS-formatted universe export with accurate receipt timestamps and classification audit trail |
| CMS guidance updates | Model retraining cycle; timing and logic changes are vendor-controlled | Change-controlled updates synchronized with CMS guidance; plan validates before go-live |
| Platform integration | API to separate case management platform; universe tracking is a third system | Native integration: intake, case management, universe scrubber, and Star tracking on one data model |

The Demo Scorecard: Bring This to Every Vendor Conversation

Use this scorecard during vendor demos to evaluate each platform consistently. Ask the vendor to demonstrate each item — not to describe it. A platform that cannot demonstrate these capabilities live in a demo is unlikely to deliver them in production.

A&G Intake Platform Demo Scorecard

Rate each item: ✓ Demonstrated Live | ~ Described Only | ✕ Not Addressed

Ask every vendor to process a real (de-identified) or representative test document from your plan’s intake queue — fax preferred — and show you the output in real time. A platform that performs well on prepared demo content but struggles with a fax-received multi-page complaint letter is showing you exactly how it will perform in your production environment.
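If you want to tally results across several vendors, the three ratings can be counted per vendor with a few lines of code. This is only a convenience sketch: using the eight capability headings from this guide as the scorecard rows, and treating an unrated capability as "Not Addressed", are assumptions.

```python
from collections import Counter

RATINGS = {"✓": "Demonstrated Live", "~": "Described Only", "✕": "Not Addressed"}

# Assumed rows: the eight capability headings from this guide.
CAPABILITIES = [
    "CMS Regulatory-Grade Classification Logic",
    "Multi-Channel Intake with Receipt Timestamp Accuracy",
    "Expedited Detection and Automatic Routing",
    "Structured Case Record Generation with Complete Data Extraction",
    "Full Decision Audit Logging with CMS-Ready Evidence Export",
    "Human Review Integration with Structured Handoff Workflow",
    "CMS Guidance Synchronization with Change-Controlled Updates",
    "Integration with A&G Case Management and Universe Scrubber",
]


def summarize(scores: dict[str, str]) -> Counter:
    """Count ratings for one vendor; unrated capabilities count as Not Addressed."""
    unknown = set(scores.values()) - set(RATINGS)
    if unknown:
        raise ValueError(f"unrecognized rating symbols: {sorted(unknown)}")
    return Counter(RATINGS[scores.get(cap, "✕")] for cap in CAPABILITIES)


# Example ratings for a hypothetical vendor.
vendor_a = {
    CAPABILITIES[0]: "✓",
    CAPABILITIES[1]: "✓",
    CAPABILITIES[2]: "~",
    CAPABILITIES[4]: "~",
}
print(summarize(vendor_a))
```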

Pricing Structures to Evaluate

A&G intake automation platforms are priced in several models, each with different implications for health plan budget planning. Per-seat pricing — common in legacy case management platforms — creates a cost structure that scales with headcount rather than with value delivered, and often produces resistance from IT procurement when AI automation is expected to reduce FTE count. The pricing models that tend to work better for MA payer AI include per-transaction pricing, per-member-per-month (PMPM) for specific workflows, and outcome-based pricing tied to cost avoided or cycle time reduction.

Outcome-based pricing is increasingly favored by health plan CFOs and COOs because it aligns vendor incentive with plan value and makes ROI calculation straightforward: the plan pays based on the measurable outcomes (reduction in timeliness findings, reduction in intake labor hours, improvement in classification accuracy) rather than on a SaaS license that exists independent of results.

Model ROI against four simultaneous cost centers: FTE hour reduction in intake; timeliness finding risk reduction (measured against civil monetary penalty exposure and audit remediation costs); Star Ratings data quality improvement (measured against the cost of a half-star downgrade); and retention and recruitment cost savings from removing the highest-burnout activity in the A&G department. Plans that model against only FTE hours consistently underestimate the business case by a factor of 3 to 5.

Ready to Put Inovaare Through the Evaluation?

We welcome the demo scorecard. Inovaare’s A&G intake agents demonstrate live classification, real-time audit logs, receipt timestamp accuracy, and universe export integrity — with de-identified test documents from your own intake queue if you’d like. Request a structured evaluation demo and bring your scorecard.

Sources: CMS ODAG Audit Protocol (December 2024); CMS Parts C and D Enrollee Grievances Guidance (November 2024 update); CMS 2024 Part C and Part D Program Audit and Enforcement Report; ATTAC Consulting Group ODAG audit analysis (2025); Health Affairs AI in utilization review analysis (January 2026).